A few months ago, I attended an education technology conference in New York where the main topic, over and over again, was artificial intelligence. I left New York with a lot of concerns about AI and its impact on education. That was awkward, because we were in the middle of developing our own software backbone for environmental education content, and I came back wondering whether we were on the wrong track.

My concerns about AI in general, and large language models (LLMs) specifically, were threefold.

First, they can be flat-out wrong, and confidently so. South Korea, for example, moved early on AI in education, but later had to pull AI-generated textbooks off the shelf because of factual errors. That is an expensive and embarrassing failure, and exactly the kind of thing educators worry about.

Second, in most of the tools I saw, AI was being used to bypass the people who should still matter most: educators, administrators, parents, and peers. The presenters at that conference were excited about AI, but most of the educators in the room were a lot less convinced that it was going to fix education just because the presenters said so.

Third, dependency. Right now we are living in the golden age of LLMs, when they still seem magical, fast, and cheap. That will not last. Soon enough they will be cluttered with ads, biased content, distortions, and incentives that have nothing to do with education. This "enshittification" is inevitable, and the question is whether it will break the tools built on top of them for education.

So we took a step back.

As an education technology company with an active AI development program, we had to ask ourselves whether what we were building still fit what educators and students actually needed. We called everyone. We demoed. We talked. We went back to conferences. We read blogs and publications. In other words, we did another full round of market research in mid-flight, on a tight development schedule.

What came back was actually pretty clear. First, the core of our program still held up. Educators do want tools that make environmental STEM easier to set up and more personal to their students. They were not rejecting AI outright. What they objected to was losing control. They were fine with an AI element as long as they remained in charge of the actual content that goes out to students.

The second issue was trickier. Relevance.

AI is very good at producing polished general content. It is much worse at knowing what matters to a particular teacher, in a particular class, in a particular place. It does not know whether a class cares more about frogs than fish. It does not know whether it rained all week or not at all. It does not know why the creek behind the school matters, while some generic lake in a stock photo does not. That is how educational content becomes flat, even when it sounds polished.

That led us to combine the two ideas. On one side, we built a system that can generate environmental science content tailored to different learners, whether they are 5th graders or adult volunteers in a citizen science program. On the other side, we built in local relevance from the start.

Educators retain full control. They are the authority on what goes to students, what gets edited, what gets rejected, and what gets redone. No educator, no content.

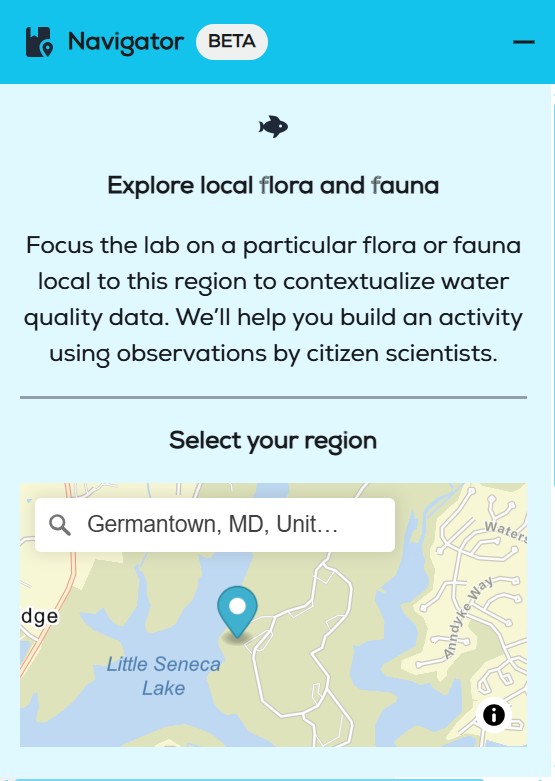

The new piece is that the educator can also anchor that content in a place that actually matters to the learners. It is one thing to talk about water quality through an abstract diagram, a textbook photo, or an AI-generated picture. It is another thing entirely when the subject is the pond by the school, the creek behind the soccer field, or the shoreline students actually know. Letting educators choose a real body of water, park, or meadow that matters to their students makes the content personal in a way generic AI never will.

The US Department of Education, which funds our work, has a thoughtful breakdown of how AI tools will be integrated into classrooms in stages that improve over time. It describes "Stage 4" of adoption as:

"Reinvention is the stage where education is reimagined, not just improved. AI is treated as a creative partner, enabling things we couldn’t do before."

We did not invent place-based learning. But we think we found a way to use AI without stripping out the place, the teacher, or the point. That, we believe, gets us to Stage 4.

So here is what we built:

It is a companion system to our tried-and-proven Lab Builder. Lab Builder already lets educators use a web form to build an educational lab that combines background information, instructions for using our water quality sensors, and closed-ended quizzes and surveys.

Our new Navigator builds on that, but adds a very important new layer.

Navigator helps educators create place-based content by pulling in animals, plants, and algae that iNaturalist users have reported at a selected location. iNaturalist is an amazing citizen science platform with global reach and a strong peer-review culture for accuracy. That matters, because the organisms suggested to the teacher are not AI slop or hallucinations. They are real observations, tied to a real place, with scientific value and public accountability.
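For readers curious what "pulling in" local observations can look like, here is a minimal Python sketch of a location-based lookup. It is not Navigator's code. It assumes the public iNaturalist API v1 `observations/species_counts` endpoint with its `lat`, `lng`, `radius` (kilometers), `quality_grade`, and `per_page` parameters, and a response with `results`, `count`, and `taxon` fields. The function names and the sample response are hypothetical and only illustrate the shape of the data.

```python
from urllib.parse import urlencode

# Public iNaturalist API v1 endpoint that aggregates observations by species
# (assumed here; check the official API docs before relying on it).
SPECIES_COUNTS_URL = "https://api.inaturalist.org/v1/observations/species_counts"


def build_species_query(lat: float, lng: float, radius_km: float, per_page: int = 20) -> str:
    """Build a URL asking for species observed within radius_km of a point."""
    params = {
        "lat": lat,
        "lng": lng,
        "radius": radius_km,          # search radius in kilometers
        "quality_grade": "research",  # community-verified observations only
        "per_page": per_page,
    }
    return f"{SPECIES_COUNTS_URL}?{urlencode(params)}"


def candidate_organisms(response: dict, limit: int = 5) -> list[tuple[str, str, int]]:
    """Turn a species_counts response into (common name, scientific name, count) tuples."""
    picks = []
    for row in response.get("results", [])[:limit]:
        taxon = row["taxon"]
        # Fall back to the scientific name when no common name is available.
        common = taxon.get("preferred_common_name") or taxon["name"]
        picks.append((common, taxon["name"], row["count"]))
    return picks


# Hypothetical, hand-written sample shaped like an API response (not real data).
SAMPLE_RESPONSE = {
    "results": [
        {"count": 12, "taxon": {"name": "Lithobates catesbeianus",
                                "preferred_common_name": "American Bullfrog"}},
        {"count": 7, "taxon": {"name": "Typha latifolia"}},
    ]
}

if __name__ == "__main__":
    print(build_species_query(40.0, -74.0, 2))
    for common, scientific, count in candidate_organisms(SAMPLE_RESPONSE):
        print(f"{common} ({scientific}): {count} observations")
```

Restricting the query to research-grade observations is one way to lean on the peer review the paragraph mentions; the educator would still pick which organism to use.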

We take that local ecological information and combine it with the educator’s goals, grade level, reading level, language preferences, class size, and other settings. Then we use AI to generate draft text, quizzes, or surveys. In the interest of full disclosure: we don't train our own AI models, and we don't alter or modify iNaturalist's content in any way. We just use it to help the educator pick a plant or animal that they know resonates with their class. Then we generate the content and present it to the educator for approval.

That is how we address the accuracy problem and the relevance problem at the same time.

The content is grounded in real observations from iNaturalist. It is shaped by the teacher’s goals. The AI helps assemble and adapt it, but it does not operate on its own and it does not get the final say. The teacher reviews everything, edits everything, rejects what is not useful, and stays in control from beginning to end.
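The review loop described above comes down to one rule: a draft only reaches students after an educator approves it. Below is a minimal Python sketch of that gate. It is not how Navigator is implemented; `ClassSettings`, `Draft`, `build_prompt`, `generate_draft`, `edit`, `review`, and `publish` are hypothetical names, and the language model is treated as an opaque callable `llm` that takes a prompt and returns text.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ClassSettings:
    """Hypothetical educator settings that shape the generated draft."""
    goal: str
    grade_level: str
    reading_level: str
    language: str
    class_size: int


@dataclass
class Draft:
    organism: str
    text: str
    status: Status = Status.DRAFT
    history: list[str] = field(default_factory=list)  # earlier versions


def build_prompt(organism: str, place: str, s: ClassSettings) -> str:
    """Combine the educator-chosen organism and place with the class settings."""
    return (
        f"Write a {s.grade_level} lesson on {organism} observed at {place}. "
        f"Goal: {s.goal}. Reading level: {s.reading_level}. "
        f"Language: {s.language}. Class size: {s.class_size}."
    )


def generate_draft(organism: str, place: str, s: ClassSettings, llm) -> Draft:
    """The model only ever produces a draft; it has no way to publish."""
    return Draft(organism=organism, text=llm(build_prompt(organism, place, s)))


def edit(draft: Draft, new_text: str) -> None:
    """Educator edit: keep the previous version, replace the text."""
    draft.history.append(draft.text)
    draft.text = new_text


def review(draft: Draft, approve: bool) -> None:
    """Educator decision: approve or reject the current text."""
    draft.status = Status.APPROVED if approve else Status.REJECTED


def publish(draft: Draft) -> str:
    """No educator, no content: only approved drafts reach students."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("draft has not been approved by the educator")
    return draft.text
```

Making `publish` the only path to students, and having it refuse anything not explicitly approved, is one simple way to encode "No educator, no content" in software rather than in policy alone.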

We never take the educator out of the loop.

So yes, we think we have built a tool that uses AI in the service of environmental science, but with clear guardrails, local relevance, and a straightforward way for educators to check, edit, and control what goes to students. That is why I am comfortable with it. It does not need to perform as “AI.” It just needs to do its job well.

On a personal note, I think we did the right thing. We paused a development program that was big for us, in mid-flight, to check whether we were still on the right track. We found that we were close but not quite there, and we adjusted to what we learned. That only worked because we are supported by amazing teachers nationwide who believe that we are trying to do the right thing and are always willing to jump on a call.

To all teachers: thank you! You rock!

If you want to know more, get in touch and we will show you a full demo. Try it out. I think you will like it as much as we do.